SLOs, error budgets, and how to make reliability a real product feature

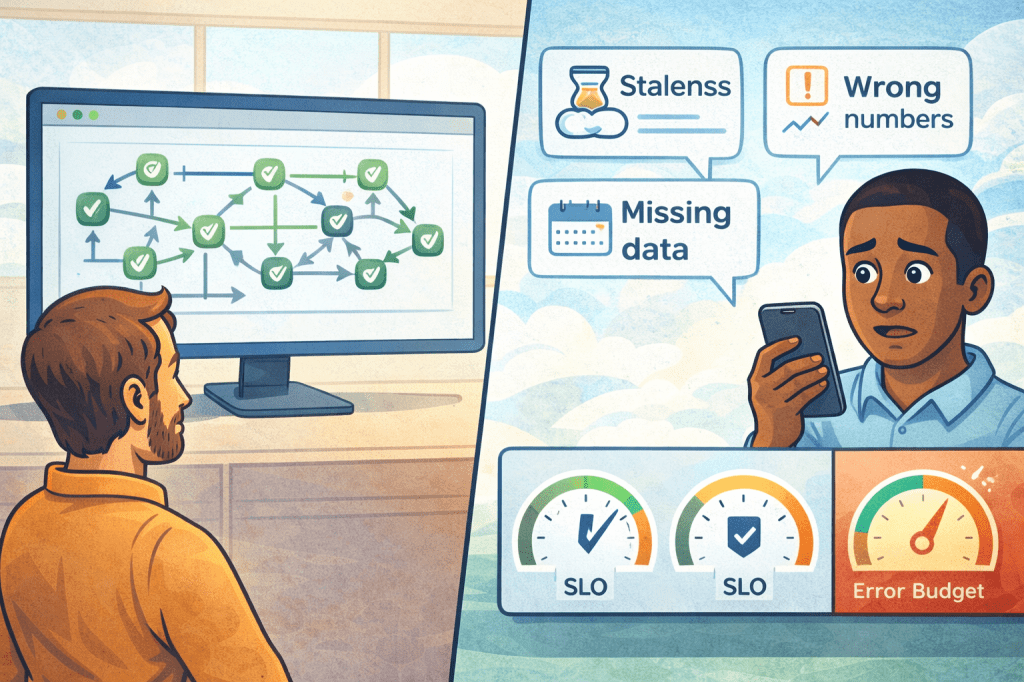

There’s a moment every Data Product Owner experiences sooner or later.

You look at the monitoring dashboard and everything is green. Jobs succeeded. No alerts. The orchestrator is happy.

And then a message arrives from a consumer:

“Numbers look wrong.”

“Dashboard is stale.”

“Why is yesterday missing?”

“We can’t trust this anymore.”

That moment is painful, but it’s also incredibly clarifying. It teaches the most important reliability lesson in “data as a product”:

A pipeline is not your product.

The consumer experience is your product.

Story 5 is about turning that insight into a system: product SLOs and error budgets. Not as bureaucracy, but as the simplest way to make reliability predictable, negotiable, and ultimately scalable.

Why “job success rate” is a trap

Pipeline monitoring is necessary, but it’s not sufficient.

A pipeline can succeed and still deliver a bad product experience when:

- the data arrives late but the job still ran

- the job processed incomplete input

- upstream changed meaning and you propagated it cleanly (but incorrectly)

- a backfill recalculated history and altered KPIs silently

- the output is technically updated, but not within the decision window consumers need

So if your reliability definition is “the job ran,” you will keep losing trust even while being operationally “successful.”

The fix starts with a different question.

Instead of “Did the pipeline succeed?” ask:

“Did we meet the consumer promise?”

That promise is your SLO.

The mindset shift: from “availability of pipelines” to “reliability of products”

A product SLO is not a fancy metric. It’s simply the measurable version of what you already say informally:

- “This dataset is updated every morning.”

- “This KPI is stable and decision-grade.”

- “This output is safe to automate actions on.”

SLOs make those statements concrete and testable.

The trick is to define SLOs that match how the product is actually used, not how you’d like it to be used.

The three SLOs that cover 80% of real-world pain

Most data products don’t need 20 metrics. They need a small set that maps directly to consumer frustration.

Here’s a pragmatic starter set:

- Freshness SLO

This is the most common trust breaker. Define it as a window, not a timestamp.

Good: “Updated by 08:30 CET on business days”

Better: “Fresh within 3 hours of source close”

Best: “Fresh within the decision window needed by the consumer”

- Completeness SLO

Completeness is often more important than speed, especially for Gold-layer outputs.

Examples:

- “At least 99% of expected records are present”

- “All mandatory business keys populated above 99.9%”

- “No missing partitions for the reporting period”

- Validity / Consistency SLO

This is where “numbers look wrong” lives.

Examples:

- “No duplicate business keys beyond threshold”

- “Reference codes must be in approved set”

- “Reconciliation check within tolerance compared to upstream totals”

- “KPI invariants hold (e.g., sums, monotonicity, balanced deltas)”

If you implement only these three consistently, you’ll prevent most reliability dramas.
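To make the three SLOs tangible, here is a minimal Python sketch of one check per category. The function names, the parameter names, and the 0.1% reconciliation tolerance are assumptions for illustration; the 3-hour window and the 99% threshold come from the examples above. Treat it as a starting point, not a finished quality framework.

```python
from datetime import datetime, timedelta, timezone


def freshness_ok(last_updated: datetime, source_close: datetime,
                 max_lag: timedelta = timedelta(hours=3)) -> bool:
    """Freshness as a window: data must land within max_lag of source close."""
    return last_updated - source_close <= max_lag


def completeness_ok(actual_rows: int, expected_rows: int,
                    min_ratio: float = 0.99) -> bool:
    """At least min_ratio of the expected records are present."""
    if expected_rows <= 0:
        return False  # without a baseline we cannot claim completeness
    return actual_rows / expected_rows >= min_ratio


def reconciliation_ok(product_total: float, upstream_total: float,
                      tolerance: float = 0.001) -> bool:
    """Product total matches upstream within a relative tolerance (assumed 0.1%)."""
    if upstream_total == 0:
        return product_total == 0
    return abs(product_total - upstream_total) / abs(upstream_total) <= tolerance


# Illustrative values only: source closed at 05:00 UTC, product landed at 07:30 UTC.
source_close = datetime(2024, 1, 15, 5, 0, tzinfo=timezone.utc)
last_updated = datetime(2024, 1, 15, 7, 30, tzinfo=timezone.utc)

fresh = freshness_ok(last_updated, source_close)   # lag is 2.5 hours
complete = completeness_ok(9_950, 10_000)          # 99.5% of expected rows
reconciled = reconciliation_ok(1_000.5, 1_000.0)   # 0.05% difference
```

Note that none of these checks asks whether the job ran. Each one measures something the consumer would notice.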

Align SLOs with medallion maturity (this matters)

In Story 3 we talked about contract promises per medallion layer. Reliability should follow the same logic.

You don’t need the same SLO strictness everywhere.

A simple mapping that works well:

Bronze

- SLO focus: ingestion availability, replayability, source coverage

- Tolerance: higher variance is okay if it’s transparent

- Most important: traceability and “what happened?”

Silver

- SLO focus: consistency, key stability, dedup rules, standardized formats

- Tolerance: moderate; changes are expected but must be controlled

- Most important: predictable structure and hygiene

Gold

- SLO focus: semantic stability, freshness in decision windows, high completeness

- Tolerance: low; surprises are expensive

- Most important: consumer trust and stable meaning

This alone removes a lot of conflict because you stop treating all layers like they have the same consumer expectation.

Error budgets: the part that changes behavior

If SLOs define reliability, error budgets define how you manage it.

An error budget is simply:

how much unreliability you allow in a period without triggering corrective action.

It’s the mechanism that keeps teams from living in two extreme modes:

- “Ship everything fast, we’ll fix later”

- “Freeze everything, we’re scared to touch it”

Error budgets create a third mode: controlled change.

How to explain error budgets in one sentence

“If we spend the budget, we pause feature work and invest in reliability until trust is restored.”

That’s it. That’s the deal.

And it works because it changes incentives.
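The arithmetic behind the deal is small enough to sketch. The example below assumes the budget is counted in days on which the SLO was missed; the `ErrorBudget` class, the `next_focus` helper, and the 90% target over 30 days are hypothetical choices for illustration, not a prescribed standard.

```python
from dataclasses import dataclass


@dataclass
class ErrorBudget:
    slo_target: float   # share of days the SLO must be met, e.g. 0.9
    period_days: int    # length of the budget window in days
    breached_days: int  # days in the window on which the SLO was missed

    @property
    def allowed_breaches(self) -> float:
        # Rounded to avoid floating-point noise such as 2.9999999999999996.
        return round((1 - self.slo_target) * self.period_days, 6)

    @property
    def remaining(self) -> float:
        return self.allowed_breaches - self.breached_days

    @property
    def exhausted(self) -> bool:
        return self.remaining <= 0


def next_focus(budget: ErrorBudget) -> str:
    """The deal: once the budget is spent, reliability work takes priority."""
    return "reliability work" if budget.exhausted else "feature work"


healthy = ErrorBudget(slo_target=0.9, period_days=30, breached_days=2)
spent = ErrorBudget(slo_target=0.9, period_days=30, breached_days=3)
```

With a 90% target over 30 days, three missed days are allowed. Two breaches leave one day of budget and feature work continues; the third breach spends the budget and flips the priority.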

A practical reliability playbook for DPOs (structured and usable)

Here’s a simple operating model you can run without building a bureaucracy.

Define for each Gold output port (start small, don’t do everything at once):

- Freshness SLO window

- Completeness threshold

- One or two validity checks that protect meaning

- Severity mapping: what happens if breached
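One way to keep that definition honest is to write it down as a small, versionable spec per output port. The sketch below is an assumption about shape only: the `OutputPortSLO` class, the port name `gold.sales_daily`, the check names, and the severity actions are all hypothetical placeholders.

```python
from dataclasses import dataclass, field
from datetime import timedelta


@dataclass(frozen=True)
class OutputPortSLO:
    port: str
    freshness_window: timedelta
    completeness_threshold: float
    validity_checks: tuple[str, ...]
    # Maps a breached SLO category to the agreed response.
    severity: dict[str, str] = field(default_factory=dict)

    def action_for(self, breached: str) -> str:
        """Look up the agreed response; unmapped breaches go to the weekly review."""
        return self.severity.get(breached, "raise in weekly reliability review")


# Hypothetical Gold output port, for illustration only.
sales_daily = OutputPortSLO(
    port="gold.sales_daily",
    freshness_window=timedelta(hours=3),
    completeness_threshold=0.99,
    validity_checks=("no_duplicate_business_keys", "reconciles_with_upstream_totals"),
    severity={
        "freshness": "notify consumers",
        "completeness": "notify consumers and hold publication",
        "validity": "open incident and hold publication",
    },
)
```

Keeping the severity mapping next to the thresholds means nobody has to negotiate the response during a breach.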

Then run a weekly 20-minute reliability review:

- Which SLOs were breached?

- Did we spend error budget?

- What are the top 1–2 root causes?

- What are the next 1–2 fixes that remove recurring pain?

This is not a reporting ceremony. It’s a product steering mechanism.

The consumer communication pattern that builds trust fast

Reliability isn’t only technical; it’s also expectation management.

When a breach happens, consumers mainly need three things:

- acknowledgement

- impact scope

- next action

A simple message template (short, consistent) is worth its weight in gold:

- What happened (freshness/completeness/validity)

- Who is impacted (which dashboards / consumers / downstream products)

- What to do now (use yesterday’s snapshot, switch to vX, avoid decisions until update)

- When the next update will come

The consistency matters more than perfect wording. Consumers trust teams that communicate predictably.
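If it helps, the template can live in code so every breach message has the same shape. This is a minimal sketch; the `breach_message` function, its parameters, and the example values are hypothetical.

```python
def breach_message(what: str, impacted: list[str],
                   workaround: str, next_update: str) -> str:
    """Render the four-part breach notice in a fixed order."""
    lines = [
        f"What happened: {what}",
        f"Who is impacted: {', '.join(impacted)}",
        f"What to do now: {workaround}",
        f"Next update: {next_update}",
    ]
    return "\n".join(lines)


# Illustrative values only.
message = breach_message(
    what="freshness SLO breached, yesterday's partition is missing",
    impacted=["Sales dashboard", "Forecasting product"],
    workaround="use yesterday's snapshot and avoid decisions until the update",
    next_update="14:00 CET",
)
```

The fixed order is the point: consumers learn where to look for scope and next action without reading the whole message.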

The four common traps (and how to avoid them)

To keep this real, here are the pitfalls I see again and again:

- Defining SLOs that are too strict

If you can’t meet it, it becomes a lie. Start achievable, tighten over time.

- Measuring everything, acting on nothing

A dashboard full of metrics without a response policy creates cynicism.

- Treating error budgets as blame

Error budgets are not a stick; they’re a steering wheel.

- Confusing “data quality” with “product reliability”

Quality is part of reliability, but reliability is about consumer experience across freshness, completeness, and meaning.

The “minimum viable reliability” starter kit

If you want a concrete starting point, here’s what I’d implement for one Gold product within a week:

- One freshness SLO tied to the decision window

- One completeness check (missing partitions or expected volume)

- One semantic guardrail (a reconciliation or invariant)

- One incident message template

- One weekly reliability review

You’ll be surprised how quickly this improves trust.

Next episode: observability that makes SLOs measurable and affordable

In Story 6 we switch perspective again.

Because once you define SLOs and error budgets as a product owner, the platform question becomes unavoidable:

How do we standardize signals across products, compute blast radius, route incidents to owners, and do it without drowning in telemetry cost?

That’s observability that scales, and it’s the missing piece that makes SLOs operational instead of aspirational.
