How platform engineers make data contracts executable (not ceremonial)

If you’re serious about “data as a product,” you eventually hit a hard truth: a contract that isn’t enforced is not a contract. It’s an intention. And intentions don’t survive scale.

In Story 3 we talked about what Data Product Owners should promise per medallion and how versioning builds trust. Story 4 is the platform engineer’s counterpart: how do we make those promises real, repeatable, and boringly reliable?

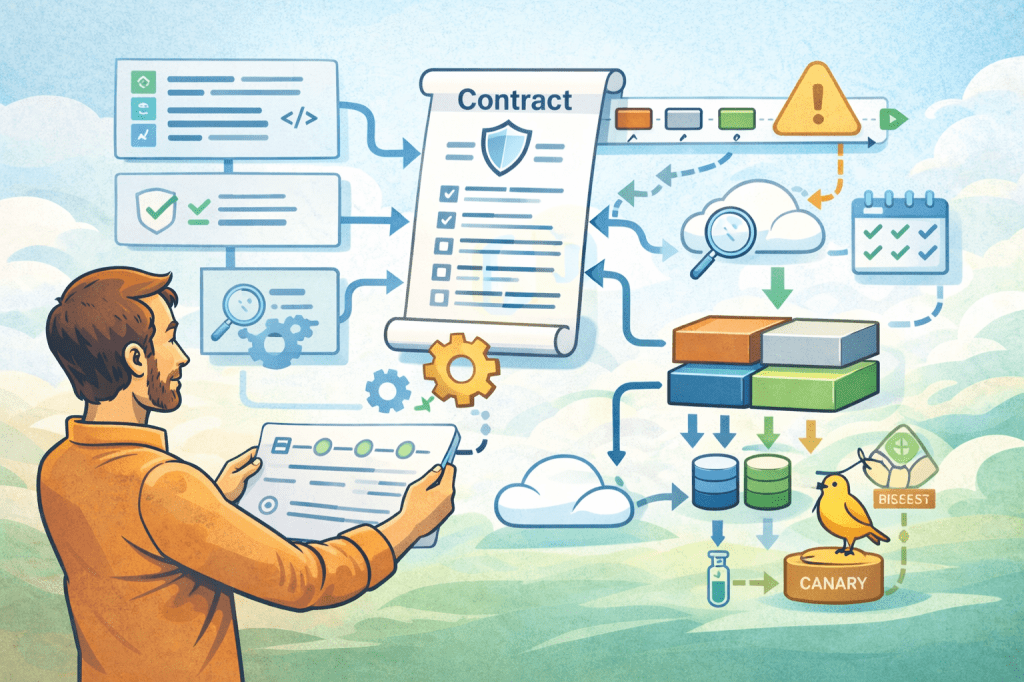

The answer is contract-as-code. Not as a buzzword, but as a concrete operating model where contracts are defined in a machine-checkable format, validated automatically before release, continuously monitored at runtime, and rolled out with safety patterns that prevent consumer breakage.

This is the post I wish I had when we moved from “nice contract templates” to “contracts that actually protect consumers.”

Why contracts fail in real life (even when everyone agrees)

Most organizations don’t fail because they don’t know what a good contract looks like. They fail because contracts degrade under pressure.

A team needs to deliver quickly, so they “temporarily” skip contract updates. A schema evolves in an upstream system and the change is pushed through because “it’s just one column.” A bug fix requires a backfill and suddenly downstream numbers move. Nobody wanted to break anything, but the system allowed it, and the platform had no mechanism to stop it.

That’s the crucial platform lesson: you can’t rely on discipline as your primary control. You need technical enforcement that makes unsafe changes hard, visible, and ideally impossible.

The contract control plane: three checkpoints that matter

A practical contract-as-code approach has three layers of enforcement. Each layer catches a different category of failure, and together they turn contracts into an executable boundary.

First, design-time validation. This happens when a contract is authored or changed, typically in a pull request workflow. Second, publish-time gates. This happens when something becomes “live” for consumers. Third, runtime drift detection. This happens when reality diverges from what was promised, because production always finds a way to surprise you.

Let’s go through each layer with the checks that are worth implementing.

Design-time validation (PR gates): stop bad contracts before they ship

This is where you get the highest ROI because the feedback loop is tight and the cost of change is low.

A good PR gate validates four things.

One, contract completeness. You’d be surprised how often contracts degrade into schema-only artifacts over time. Your CI should fail if required fields are missing: owner, purpose, medallion, exposure ports, quality checks, freshness promise, and versioning metadata. The exact fields will differ by org, but the principle stands: if the contract is incomplete, the product is not releasable.

Two, compatibility checks. This is where you codify breaking vs non-breaking changes. If someone removes a column, changes a type, changes a key, changes grain, or alters semantics in a way that can break consumers, the gate should detect it. You can implement this in tiers. Start with schema compatibility and later add semantic checks where you can. The key is to make breaking changes explicit and versioned, not accidental.

Three, policy compliance. This connects directly to Story 2. If your platform has rules like “Gold may not consume Bronze” or “This product type may not expose that port,” enforce them here. The contract should declare upstream subscriptions and output ports, and the gate should validate them against your policy matrix. This prevents whole categories of DAG chaos before it starts.

Four, data product identity invariants. This sounds boring, but it’s what prevents name drift and metadata spaghetti. Validate naming conventions, ensure stable IDs, ensure version increments follow your rules. If you can’t reliably identify a product across time, everything downstream becomes harder: lineage, incidents, audits, deprecations, and cost attribution.

A simple but powerful mindset is to treat contracts like an API spec. You wouldn’t merge an API change without linting, compatibility checks, and required metadata. Data products deserve the same respect.
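To make the compatibility tier concrete, here is a minimal sketch of a schema-level check. It assumes an output schema can be reduced to a mapping of column name to type string. The function names `find_breaking_changes` and `gate` are hypothetical, as is the rule that a breaking change is acceptable only when the major version increases. Key, grain, and semantic changes are out of scope for this tier.

```python
from typing import Dict, List

Schema = Dict[str, str]  # column name -> type string (assumed representation)


def find_breaking_changes(old: Schema, new: Schema) -> List[str]:
    """Return human-readable reasons why `new` would break consumers of `old`."""
    problems = []
    for column, old_type in old.items():
        if column not in new:
            problems.append(f"column removed: {column}")
        elif new[column] != old_type:
            problems.append(f"type changed: {column} {old_type} -> {new[column]}")
    return problems


def gate(old: Schema, new: Schema, old_major: int, new_major: int) -> bool:
    """Pass non-breaking changes; pass breaking ones only with a major version bump."""
    breaking = find_breaking_changes(old, new)
    return not breaking or new_major > old_major


old = {"order_id": "string", "amount": "decimal(18,2)"}
added = {**old, "currency": "string"}                  # additive change
retyped = {"order_id": "string", "amount": "double"}   # type change

print(find_breaking_changes(old, added))
print(find_breaking_changes(old, retyped))
print(gate(old, retyped, old_major=1, new_major=1))
```

In this sketch added columns are treated as non-breaking, which is a common convention but still a choice your org has to make explicitly.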

Publish-time gates: protect consumers from “oops, it’s live”

Even with PR gates, publish is where things can go wrong. Artifacts get deployed out of sequence. Someone bypasses a branch. An environment-specific setting changes. Or a release includes multiple products whose combined graph creates a new risk.

Publish-time gates focus on what PR validation can’t fully prove: the health of the release as a whole.

Start with graph validation. Before you activate subscriptions and triggers, validate the dependency graph: no cycles, acceptable depth, acceptable fan-out, and compliance with allowed upstream rules. If your platform supports event-driven triggering, this is where you block the kinds of cascades that turn one upstream refresh into a downstream stampede.
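The graph checks described above can be sketched in a few lines. This is an illustration under assumptions: the release is represented as a mapping from each product to the upstream products it subscribes to, and `validate_graph`, `max_depth`, and `max_fan_out` are hypothetical names and thresholds, not part of any specific orchestrator.

```python
from typing import Dict, List

# product -> list of upstream products it subscribes to (assumed representation)
Graph = Dict[str, List[str]]


def validate_graph(upstreams: Graph, max_depth: int, max_fan_out: int) -> List[str]:
    """Return a list of violations; an empty list means the release may proceed."""
    errors: List[str] = []

    # Fan-out: how many products subscribe directly to each upstream.
    fan_out: Dict[str, int] = {}
    for ups in upstreams.values():
        for up in ups:
            fan_out[up] = fan_out.get(up, 0) + 1
    for product, count in sorted(fan_out.items()):
        if count > max_fan_out:
            errors.append(f"fan-out {count} > {max_fan_out}: {product}")

    # Depth (hops to the furthest source) via memoized DFS; revisiting a node
    # that is still being explored means the graph contains a cycle.
    depth: Dict[str, int] = {}
    in_progress = set()

    def depth_of(node: str) -> int:
        if node in depth:
            return depth[node]
        if node in in_progress:
            raise ValueError(f"cycle through {node}")
        in_progress.add(node)
        result = max((depth_of(up) + 1 for up in upstreams.get(node, [])), default=0)
        in_progress.discard(node)
        depth[node] = result
        return result

    try:
        for product in sorted(upstreams):
            if depth_of(product) > max_depth:
                errors.append(f"depth {depth[product]} > {max_depth}: {product}")
    except ValueError as exc:
        errors.append(str(exc))
    return errors


release = {
    "silver.orders": ["bronze.orders"],
    "gold.revenue": ["silver.orders"],
    "gold.churn": ["silver.orders"],
}
print(validate_graph(release, max_depth=3, max_fan_out=5))
print(validate_graph({"a": ["b"], "b": ["a"]}, max_depth=3, max_fan_out=5))
```

The sketch stops at the first cycle it finds, since depth is undefined once a cycle exists.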

Then validate contract-to-runtime linkage. If a contract says an output port exists at a certain location, verify the artifact is actually there, accessible, and aligned. This sounds obvious, but it’s where “contract says X, storage says Y” failures happen, especially when teams restructure paths or rename objects.

Add a release readiness check for SLO-critical products. For Gold outputs, you often want stricter gating. For example, you might require that quality checks have been executed successfully in the target environment, that freshness windows are achievable given upstream schedules, and that the “breaking change path” has an active parallel version if needed.

Finally, publish-time is where you stamp an audit record. This matters more than people think. It’s not just compliance. It’s operational clarity. When an incident happens, you want to know exactly what changed, when, by whom, and which policies were evaluated at the time.
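One possible shape for that audit record, as a sketch: it fingerprints the contract with a SHA-256 hash of its canonical JSON form so you can later prove which exact contract went live. The field names and the `build_audit_record` helper are assumptions for illustration; where you persist the record is up to your platform.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(contract: dict, actor: str, policy_results: dict,
                       published_at: datetime) -> dict:
    """Describe what was published, when, by whom, and which policies were evaluated."""
    # Sorting keys makes the hash independent of key order in the contract file.
    canonical = json.dumps(contract, sort_keys=True, separators=(",", ":"))
    return {
        "product_id": contract["id"],
        "version": contract["version"],
        "contract_sha256": hashlib.sha256(canonical.encode("utf-8")).hexdigest(),
        "published_by": actor,
        "published_at": published_at.isoformat(),
        "policies_evaluated": policy_results,
    }


record = build_audit_record(
    contract={"id": "gold.revenue", "version": "2.0.0", "owner": "finance-data"},
    actor="release-bot",
    policy_results={"allowed_upstreams": "pass", "graph_validation": "pass"},
    published_at=datetime(2024, 1, 1, tzinfo=timezone.utc),
)
print(json.dumps(record, indent=2))
```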

The goal is simple: consumers should never be surprised by a change that slipped through a deployment crack.

Runtime drift detection: when production diverges from the contract

Even with strong gates, runtime reality will differ from expectations. A vendor system changes without notice. A producer starts sending nulls in a field that used to be complete. A timestamp shifts. A backfill changes historical data. Or an upstream pipeline quietly degrades and freshness begins to slip.

Contract-as-code shines when contracts become a baseline for drift detection. You’re no longer just monitoring pipelines. You’re monitoring whether the product still matches the promise.

There are three categories of drift worth detecting.

Schema drift. Columns appear or disappear, types change, a column declared non-nullable starts carrying nulls in practice, and so on. Schema drift can often be detected automatically and early.
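A schema drift check can be as small as a three-way diff between the declared and the observed schema. The sketch below assumes both are available as column-to-type mappings; how you obtain the observed schema (catalog, table metadata, sampling) depends on your platform, and `detect_schema_drift` is a hypothetical name.

```python
from typing import Dict, List


def detect_schema_drift(declared: Dict[str, str], observed: Dict[str, str]) -> Dict[str, List[str]]:
    """Compare the contract's schema with what is actually in production."""
    shared = declared.keys() & observed.keys()
    return {
        "missing": sorted(declared.keys() - observed.keys()),
        "unexpected": sorted(observed.keys() - declared.keys()),
        "retyped": sorted(c for c in shared if declared[c] != observed[c]),
    }


declared = {"order_id": "string", "amount": "decimal(18,2)", "created_at": "timestamp"}
observed = {"order_id": "string", "amount": "double", "channel": "string"}

drift = detect_schema_drift(declared, observed)
print(drift)
```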

Quality drift. Completeness drops, duplicates spike, invalid codes appear, distributions shift. This is where you connect contract-specified checks to runtime measurements. The contract doesn’t have to list a thousand checks. It should list the checks that matter, and the platform should run them consistently.
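As an illustration of connecting contract-specified checks to runtime measurements, here is a small sketch with two metrics, completeness and duplicate rate. The check format (`metric`, `column`, `min`, `max`) is an assumed contract layout, and rows are plain dictionaries to keep the example self-contained; a real platform would push these computations down to the query engine.

```python
from typing import Dict, List, Optional

Row = Dict[str, Optional[str]]


def completeness(rows: List[Row], column: str) -> float:
    """Share of rows where `column` is not null."""
    if not rows:
        return 1.0
    return sum(1 for r in rows if r.get(column) is not None) / len(rows)


def duplicate_rate(rows: List[Row], column: str) -> float:
    """Share of rows that repeat an already-seen value of `column`."""
    if not rows:
        return 0.0
    return 1 - len({r.get(column) for r in rows}) / len(rows)


METRICS = {"completeness": completeness, "duplicate_rate": duplicate_rate}


def failed_checks(rows: List[Row], checks: List[dict]) -> List[str]:
    """Run every contract-declared check and return the names of those that fail."""
    failures = []
    for check in checks:
        value = METRICS[check["metric"]](rows, check["column"])
        if value < check.get("min", float("-inf")) or value > check.get("max", float("inf")):
            failures.append(check["name"])
    return failures


checks = [
    {"name": "customer_id complete", "metric": "completeness", "column": "customer_id", "min": 0.75},
    {"name": "order_id unique", "metric": "duplicate_rate", "column": "order_id", "max": 0.0},
]
rows = [
    {"order_id": "1", "customer_id": "a"},
    {"order_id": "2", "customer_id": None},
    {"order_id": "3", "customer_id": "b"},
    {"order_id": "3", "customer_id": "c"},
]
print(failed_checks(rows, checks))
```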

Semantic drift. This is the hardest, but also the most valuable at the Gold layer. It’s where definitions and meaning shift even though the schema stays the same. You won’t fully automate semantic drift detection early, but you can approximate it with golden metrics, reconciliation checks, and “known invariants” that should hold over time. Even a small set of semantic checks will catch the most expensive failures.

The best runtime drift systems do two extra things. They compute blast radius and they route to owners automatically.

Blast radius means: if this output port is drifting, which downstream products and consumers are impacted? This turns alerts into actionable context. Routing means: the alert goes to the correct owning team, with the contract and the last known good version attached.
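Blast radius is a graph traversal, and routing is a lookup. The sketch below assumes you already have a lineage map from each product to its direct downstream consumers and an ownership map; `blast_radius`, `build_alert`, and the fallback route `platform-on-call` are hypothetical names.

```python
from collections import deque
from typing import Dict, List


def blast_radius(downstream: Dict[str, List[str]], start: str) -> List[str]:
    """Return every product or consumer transitively downstream of `start`."""
    seen = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in downstream.get(node, []):
            if child not in seen and child != start:
                seen.add(child)
                queue.append(child)
    return sorted(seen)


def build_alert(port: str, downstream: Dict[str, List[str]], owners: Dict[str, str]) -> dict:
    """Attach impact and ownership context so the alert is actionable."""
    return {
        "port": port,
        "route_to": owners.get(port, "platform-on-call"),
        "impacted": blast_radius(downstream, port),
    }


lineage = {
    "silver.orders": ["gold.revenue", "gold.churn"],
    "gold.revenue": ["dashboard.finance"],
}
owners = {"silver.orders": "team-orders"}

print(build_alert("silver.orders", lineage, owners))
```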

Without those two, you’ll build a very expensive alert generator that nobody trusts.

Safe rollout patterns: how to change without breaking everyone

Contracts reduce risk, but they don’t eliminate change. Your platform still needs patterns that make change survivable.

The most useful patterns are boring, repeatable, and require minimal heroics.

Parallel versioned ports. For breaking changes, publish a new versioned output port while keeping the old one stable for a defined deprecation window. Consumers migrate on their timeline, with support, and you avoid “flag day” failures. This is the data-product equivalent of versioned APIs.

Dual publishing with reconciliation. When you introduce new transformation logic, run the old and new paths in parallel for a period and reconcile their outputs. This gives you confidence and catches semantic mistakes before consumers experience them.
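A reconciliation step for dual publishing might look like the following sketch. It assumes both paths produce a metric keyed by some business key (here a month), and that a relative tolerance is an acceptable definition of "equal"; the `reconcile` name and the tolerance value are illustrative choices.

```python
import math
from typing import Dict, Optional, Tuple


def reconcile(old: Dict[str, float], new: Dict[str, float],
              rel_tol: float = 1e-9) -> Dict[str, Tuple[Optional[float], Optional[float]]]:
    """Return keys where the two paths disagree, with (old, new) values."""
    mismatches = {}
    for key in sorted(old.keys() | new.keys()):
        a, b = old.get(key), new.get(key)
        # A key present on only one side is always a mismatch.
        if a is None or b is None or not math.isclose(a, b, rel_tol=rel_tol):
            mismatches[key] = (a, b)
    return mismatches


old_path = {"2024-01": 100.0, "2024-02": 250.0}
new_path = {"2024-01": 100.0, "2024-02": 251.5, "2024-03": 80.0}

print(reconcile(old_path, new_path))
```

An empty result over the full parallel-run window is a reasonable precondition for switching consumers to the new path.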

Canary consumers or canary workloads. For large changes, direct a subset of consumers or a subset of workloads to the new version first. In practice, this could be an internal dashboard, a single domain, or a small cohort of analytic workloads. Canary patterns are less common in data than in application engineering, but they’re incredibly effective when you can implement them.

Feature toggles for triggers. In event-driven or orchestrated systems, a change is often dangerous because it changes triggering behavior and refresh cascades. Make triggers explicitly activatable. Default to “off” for new downstream subscriptions until a consumer opts in. This single design choice prevents many accidental cascades.

Time-boxed exceptions. Sometimes the business needs a shortcut. Your platform should support exceptions, but only in a controlled way: explicit rationale, named owner, expiry date, and audit record. Then the platform should enforce the expiry. Exceptions are fine. Permanent exceptions are technical debt with a suit on.
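Enforcing the expiry is the part that usually gets skipped, so here is a minimal sketch of it. The `PolicyException` record mirrors the fields named above (rationale, owner, expiry); the class and function names are hypothetical, and the choice to keep an exception valid through its expiry date is an assumption.

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass(frozen=True)
class PolicyException:
    policy: str
    product_id: str
    rationale: str
    owner: str
    expires_on: date


def is_exempt(policy: str, product_id: str,
              exceptions: List[PolicyException], today: date) -> bool:
    """True only if a matching exception exists and has not yet expired."""
    return any(
        e.policy == policy and e.product_id == product_id and today <= e.expires_on
        for e in exceptions
    )


exceptions = [
    PolicyException(
        policy="gold-may-not-consume-bronze",
        product_id="gold.revenue",
        rationale="Silver layer for orders not yet available",
        owner="finance-data",
        expires_on=date(2024, 6, 30),
    )
]

print(is_exempt("gold-may-not-consume-bronze", "gold.revenue", exceptions, date(2024, 6, 30)))
print(is_exempt("gold-may-not-consume-bronze", "gold.revenue", exceptions, date(2024, 7, 1)))
```

Because the gate evaluates the date on every run, an expired exception starts failing the build without anyone having to remember it.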

All of these patterns share one idea: consumers should get to choose when they adopt a breaking change, and the platform should make that choice safe.

The minimal path to implement contract-as-code without boiling the ocean

If you’re building this from scratch, it’s easy to overdesign. The simplest progressive approach looks like this.

Start by storing contracts in a repository and adding a linter. Enforce completeness and naming invariants. This is cheap and immediately improves hygiene.
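A first linter can be very small. The sketch below assumes contracts are loaded into dictionaries (from YAML or JSON, for example). The key names in `REQUIRED_FIELDS` and the `<medallion>.<snake_case_name>` naming rule are illustrative assumptions; substitute your own conventions.

```python
import re
from typing import List

# Assumed key names for the required contract fields.
REQUIRED_FIELDS = (
    "owner", "purpose", "medallion", "output_ports",
    "quality_checks", "freshness", "version",
)
# Assumed naming convention: "<medallion>.<snake_case_name>".
NAME_PATTERN = re.compile(r"^(bronze|silver|gold)\.[a-z][a-z0-9_]*$")


def lint_contract(contract: dict) -> List[str]:
    """Return lint errors; an empty list means the contract is releasable."""
    errors = [f"missing field: {f}" for f in REQUIRED_FIELDS if not contract.get(f)]
    name = contract.get("name", "")
    if not NAME_PATTERN.match(name):
        errors.append(f"invalid name: {name!r}")
    return errors


good = {
    "name": "gold.revenue",
    "owner": "finance-data",
    "purpose": "Monthly recognized revenue",
    "medallion": "gold",
    "output_ports": ["sql"],
    "quality_checks": ["order_id unique"],
    "freshness": "daily by 06:00 UTC",
    "version": "1.0.0",
}
bad = {"name": "Gold Revenue", "owner": "finance-data"}

print(lint_contract(good))
print(lint_contract(bad))
```

Wire the function into CI so that a non-empty result fails the pull request.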

Next, implement schema compatibility checks for output ports. Treat breaking changes as a different path, not as a forbidden act. Force a version bump and a parallel output.

Then connect contracts to policy compliance. Validate allowed output ports and allowed upstream subscriptions. This ties contract-as-code to the orchestration guardrails from Story 2.
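The policy matrix itself can live as data next to the contracts. In the sketch below only the rule "Gold may not consume Bronze" comes from this series; every other entry in `ALLOWED_UPSTREAMS` is a placeholder assumption, and `check_subscriptions` is a hypothetical helper.

```python
from typing import Dict, List, Set

# Which upstream layers each medallion may subscribe to (illustrative matrix).
ALLOWED_UPSTREAMS: Dict[str, Set[str]] = {
    "bronze": {"source"},
    "silver": {"bronze", "silver"},
    "gold": {"silver", "gold"},
}


def check_subscriptions(medallion: str, upstream_medallions: List[str]) -> List[str]:
    """Return one violation per declared upstream that the matrix does not allow."""
    allowed = ALLOWED_UPSTREAMS.get(medallion, set())
    return [
        f"{medallion} may not consume {up}"
        for up in upstream_medallions
        if up not in allowed
    ]


print(check_subscriptions("gold", ["silver", "bronze"]))
print(check_subscriptions("silver", ["bronze"]))
```

Unknown medallions get an empty allow-list here, so the gate fails closed rather than open.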

After that, add runtime checks for schema drift and a small set of quality checks. Don’t start with everything. Start with the checks that match your biggest incident classes.

Finally, add blast radius computation and owner routing. This is where the system becomes operationally mature and starts to cut the time it takes to triage and resolve incidents.

The goal isn’t perfection. The goal is to turn contracts into an integrated part of the platform lifecycle, so they can’t drift into irrelevance.

What “done” looks like for contract-as-code

You’ll know you’re succeeding when the day-to-day experience changes in a specific way.

Breaking changes don’t feel like emergencies anymore. They become planned, versioned releases.

Incidents don’t start with “what changed?” because the audit record tells you.

Teams stop arguing about what Gold should promise, because the contract enforces it and the platform monitors it.

Consumers stop fearing updates, because they have predictable migration paths.

That’s the real payoff. Contract-as-code is not about control. It’s about confidence.

Next episode: reliability and SLOs as a product feature

In Story 5 we switch back to the Data Product Owner perspective and talk about reliability as a feature: SLOs, error budgets, and how to stop the never-ending firefight where pipelines are green but the product experience is failing.
